[ad_1]

Google Research

On Monday, a group of AI researchers from Google and the Technical University of Berlin unveiled PaLM-E, a multimodal embodied visual-language model (VLM) with 562 billion parameters that integrates vision and language for robotic control. They claim it is the largest VLM ever developed and that it can perform a variety of tasks without the need for retraining.

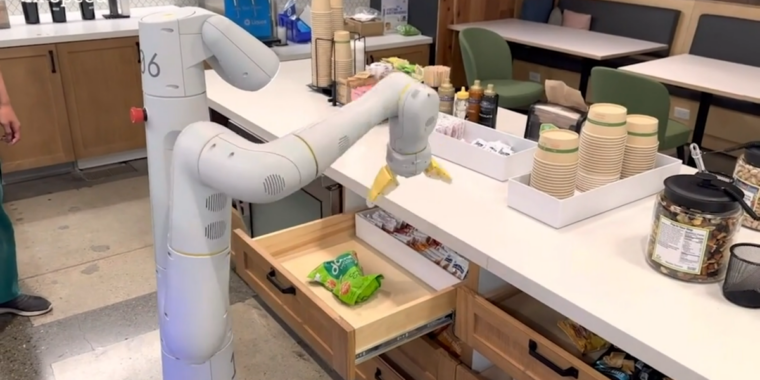

According to Google, when given a high-level command, such as “bring me the rice chips from the drawer,” PaLM-E can generate a plan of action for a mobile robot platform with an arm (developed by Google Robotics) and execute the actions by itself.

PaLM-E does this by analyzing data from the robot’s camera without needing a pre-processed scene representation. This eliminates the need for a human to pre-process or annotate the data and allows for more autonomous robotic control.

In a Google-provided demo video, PaLM-E executes “bring me the rice chips from the drawer,” which includes multiple planning steps as well as incorporating visual feedback from the robot’s camera.

It’s also resilient and can react to its environment. For example, the PaLM-E model can guide a robot to get a chip bag from a kitchen—and with PaLM-E integrated into the control loop, it becomes resistant to interruptions that might occur during the task. In a video example, a researcher grabs the chips from the robot and moves them, but the robot locates the chips and grabs them again.

In another example, the same PaLM-E model autonomously controls a robot through tasks with complex sequences that previously required human guidance. Google’s research paper explains how PaLM-E turns instructions into actions:

We demonstrate the performance of PaLM-E on challenging and diverse mobile manipulation tasks. We largely follow the setup in Ahn et al. (2022), where the robot needs to plan a sequence of navigation and manipulation actions based on an instruction by a human. For example, given the instruction “I spilled my drink, can you bring me something to clean it up?”, the robot needs to plan a sequence containing “1. Find a sponge, 2. Pick up the sponge, 3. Bring it to the user, 4. Put down the sponge.” Inspired by these tasks, we develop 3 use cases to test the embodied reasoning abilities of PaLM-E: affordance prediction, failure detection, and long-horizon planning. The low-level policies are from RT-1 (Brohan et al., 2022), a transformer model that takes RGB image and natural language instruction, and outputs end-effector control commands.

PaLM-E is a next-token predictor, and it’s called “PaLM-E” because it’s based on Google’s existing large language model (LLM) called “PaLM” (which is similar to the technology behind ChatGPT). Google has made PaLM “embodied” by adding sensory information and robotic control.

Since it’s based on a language model, PaLM-E takes continuous observations, like images or sensor data, and encodes them into a sequence of vectors that are the same size as language tokens. This allows the model to “understand” the sensory information in the same way it processes language.

A Google-provided demo video showing a robot guided by PaLM-E following the instruction, “Bring me a green star.” The researchers say the green star “is an object that this robot wasn’t directly exposed to.”

In addition to the RT-1 robotics transformer, PaLM-E draws from Google’s previous work on ViT-22B, a vision transformer model revealed in February. ViT-22B has been trained on various visual tasks, such as image classification, object detection, semantic segmentation, and image captioning.

Google Robotics isn’t the only research group working on robotic control with neural networks. This particular work resembles Microsoft’s recent “ChatGPT for Robotics” paper, which experimented with combining visual data and large language models for robotic control in a similar way.

Robotics aside, Google researchers observed several interesting effects that apparently come from using a large language model as the core of PaLM-E. For one, it exhibits “positive transfer,” which means it can transfer the knowledge and skills it has learned from one task to another, resulting in “significantly higher performance” compared to single-task robot models.

Also, they observed a trend with model scale: “The larger the language model, the more it maintains its language capabilities when training on visual-language and robotics tasks—quantitatively, the 562B PaLM-E model nearly retains all of its language capabilities.”

PaLM-E is the largest VLM reported to date. We observe emergent capabilities like multimodal chain of thought reasoning, and multi-image inference, despite being trained on only single-image prompts. Though not the focus of our work, PaLM-E sets a new SOTA on OK-VQA benchmark. pic.twitter.com/9FHug25tOF

— Danny Driess (@DannyDriess) March 7, 2023

And the researchers claim that PaLM-E exhibits emergent capabilities like multimodal chain-of-thought reasoning (allowing the model to analyze a sequence of inputs that include both language and visual information) and multi-image inference (using multiple images as input to make an inference or prediction) despite being trained on only single-image prompts. In that sense, PaLM-E seems to continue the trend of surprises emerging as deep learning models get more complex over time.

Google researchers plan to explore more applications of PaLM-E for real-world scenarios such as home automation or industrial robotics. And they hope PaLM-E will inspire more research on multimodal reasoning and embodied AI.

“Multimodal” is a buzzword we’ll be hearing more and more as companies reach for artificial general intelligence that will ostensibly be able to perform general tasks like a human.

[ad_2]

Source link